When we were designing AxisCorePay — a custodial crypto payment platform handling Ethereum ERC-20 and Tron TRC-20 transactions — the hardest question wasn’t about blockchain integration or REST API design. It was this:

How do you authorize a settlement when you can’t fully trust any single actor in your own system, including your own infrastructure?

We weren’t being paranoid. We had read the post-mortems. Every major custodial wallet breach follows the same script: attacker reaches a private key stored in one place. The playbook of “encrypt it and put it in Vault” doesn’t solve the root problem — the key is assembled somewhere. At some point, one process holds it in memory, and that process can be compromised.

This article covers the specific solution we shipped: Shamir’s Secret Sharing (SSS) implemented in Go over , integrated into a bilateral settlement authorization flow. We’ll cover the math, the production code, and the three implementation mistakes that don’t show up in tutorials but will break your security guarantee in the field.

Why standard key management isn’t enough for settlements

We use MPC-TSS (Multi-Party Computation — Threshold Signature Scheme) for the daily transaction signing path. With MPC-TSS, the private key is mathematically never assembled: signing happens across distributed nodes through a multi-round protocol, and no single node ever holds enough information to reconstruct the key. This is the right answer for high-frequency transaction signing.

But for merchant settlements — periodic payouts where both the platform and the merchant must explicitly authorize a transfer — MPC-TSS felt like the wrong tool. Settlements are infrequent, involve human confirmation from both sides, and need to be auditable as a two-party agreement. The complexity of a multi-round cryptographic protocol adds latency and opacity where you actually want simplicity and transparency.

We prototyped a simpler approach first: store the settlement payload in Vault, require two separate service calls to release it. Our security review found the obvious gap: “What if the Vault token is compromised? What if someone with admin access approves both sides?” We didn’t have a mathematical answer — just process controls. Process controls fail.

Shamir’s Secret Sharing gave us a mathematical answer: split the settlement payload such that neither side alone can reconstruct it, with a guarantee rooted in information theory, not operational discipline.

Choosing the right scheme: the comparison we actually ran

Before writing a line of code, we evaluated every viable option:

| Approach | Key property | Why it didn’t fit our settlement case |

|---|---|---|

| Multisig (blockchain-level) | Native chain enforcement | Chain-specific — we support ETH and Tron, no single solution |

| MPC-TSS | Key never assembles | Complex multi-round protocol; adds latency where human confirmation is the bottleneck anyway |

| Shamir SSS | Information-theoretic security, simple | Secret assembles briefly in memory — acceptable for payload verification |

| XOR split (2-party) | Trivial to implement | No threshold: lose one share, secret is gone permanently |

| Dual-key AES wrapping | Familiar pattern | Halving an AES key search space is not information-theoretic security |

Shamir was the right fit for settlements specifically because:

- Information-theoretic security: fewer than shares reveal zero bits about the secret — not computationally hard to break, but mathematically impossible, regardless of adversary compute

- ~200 lines of auditable Go: reviewable in a sitting, testable thoroughly

- Flexible threshold: 2-of-2 for our bilateral case, 2-of-3 for fault-tolerant variants

- Byte-oriented: works on arbitrary-length payloads without encoding overhead

The math: Shamir SSS over

Shamir’s algorithm in plain language

Shamir’s Secret Sharing is a way to “split” a secret — a password, a key, or a safe code — into several pieces and give those pieces to different people. The secret can only be recovered when at least a certain number of pieces are combined. If an attacker has fewer pieces than that threshold, they learn nothing about the secret.

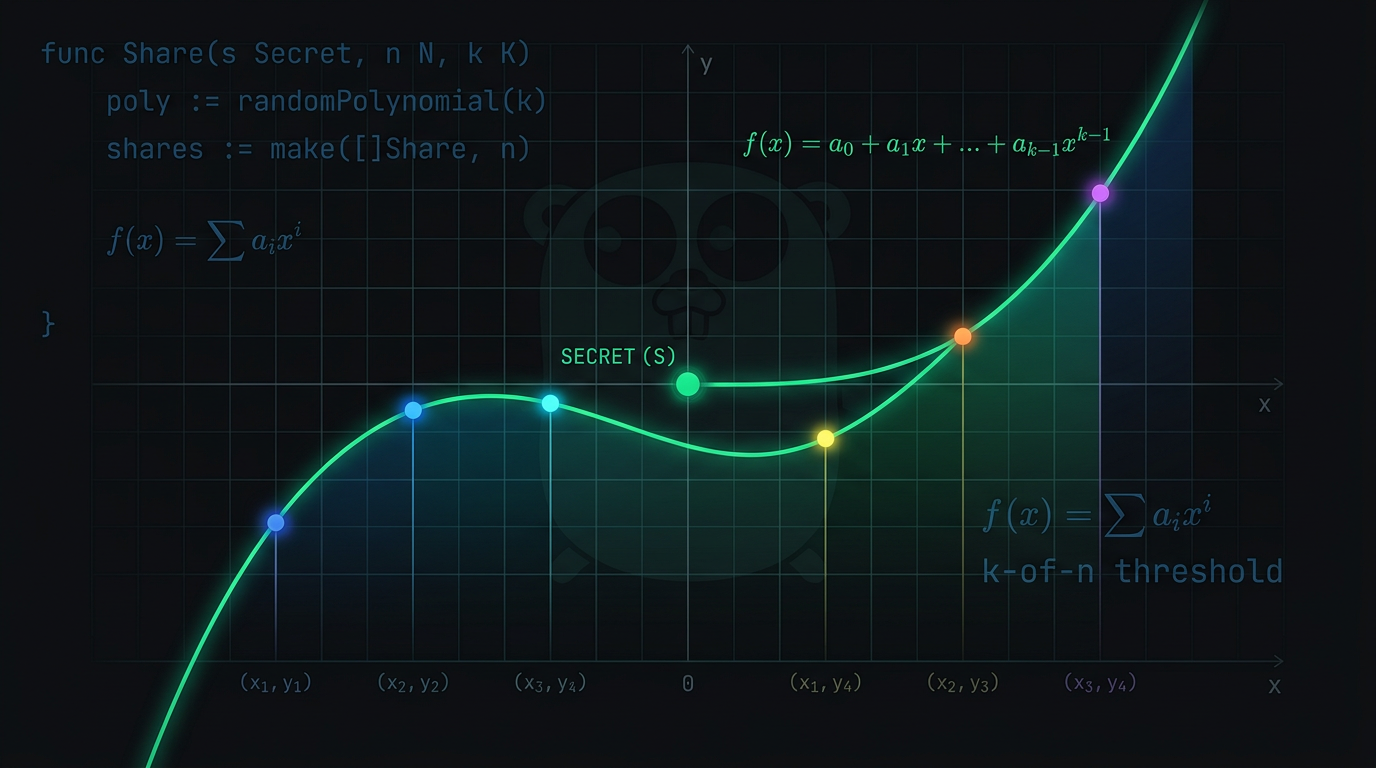

The simple idea. Think of the secret as a point on the coordinate plane. To define a line you need two points; with only one point, infinitely many lines pass through it. Here, the secret is hidden in the coefficients of a polynomial, and the pieces (shares) are points on that polynomial’s curve. The interactive chart and diagram below bring this idea to life.

The math without the jargon.

-

Choose the threshold — how many people must come together to recover the secret. Denote that number by . For example, (any three).

-

Build a polynomial of degree . If , the polynomial has degree 2: The constant term is the secret. The other coefficients are chosen at random (from a cryptographically secure source).

-

Hand out the shares. For each participant pick a distinct (e.g. 1, 2, 3, …) and compute the corresponding from the polynomial. The pair is one share. You can create as many shares as you want, even more than .

-

Reconstructing the secret. With any shares — i.e. points — there is exactly one polynomial of degree passing through them. Its constant term is the secret. This is done with Lagrange interpolation: a formula that recovers the polynomial from the points. It works because a polynomial of degree is uniquely determined by points. With fewer points, there are infinitely many such polynomials, and every possible secret is equally likely.

Concrete example. Suppose the secret is and we want any three of five people to recover it.

- Take a degree-2 polynomial: .

- Pick random coefficients , .

- So the polynomial is .

- Compute shares for :

- : → share

- : → share

- : → share

- : → share

- : → share

If participants 2, 3 and 5 get together, they have , , . Lagrange interpolation recovers the polynomial and thus the constant term . If an attacker only has two points — e.g. and — there are infinitely many degree-2 polynomials through those two points, each with a different constant term. The real secret is one of them, but guessing it is impossible; all possibilities are equally likely. That’s the information-theoretic guarantee.

Bytes in the machine: text and images are just numbers. A computer doesn’t “see” letters or pixels directly. To it, any data — a word, an image, a key — is just a sequence of numbers (bytes). Each character has a numeric code (e.g. 0–255 in ASCII); each pixel is stored as numbers. So applying Shamir to “characters” or to a signing payload is the same idea: treat the secret as a list of bytes and run the scheme once per byte.

Example: the secret is the word “CAT”. In memory that’s three bytes: C = 67, A = 65, T = 84. We want any 3 people to recover it (), so for each byte we build a separate degree-2 polynomial.

- Byte 1 (67): pick random coefficients, e.g. , . Polynomial for this byte: .

- Byte 2 (65): another polynomial with constant term 65 and different random , .

- Byte 3 (84): a third polynomial with constant term 84.

Now compute shares for participants . Participant #1 () gets: for the first byte , for the second byte some , for the third some . So their share is the triple — one value per byte. Participant #2 gets the three values at , and so on.

When any three participants combine, they take their three shares and, for each byte position, reconstruct the unique degree-2 polynomial through three points and read off the constant term. That gives 67, 65, 84 — the original bytes — i.e. the word “CAT”. In AxisCorePay we do exactly this for a multi-byte signing payload: one polynomial per byte, same threshold , and each share is a byte array of the same length as the secret.

What about the “modulo” trick? For the scheme to work cleanly in code, we do arithmetic not in the integers but in a finite field — either modulo a large prime (in classic descriptions) or, as we do in AxisCorePay, in : 256 elements, one per byte. That keeps every value in a fixed range, avoids rounding and overflow, and keeps shares as compact, random-looking bytes. The intuition above is unchanged.

Bottom line. Shamir’s scheme lets you choose the threshold flexibly: e.g. 5-out-of-10, 2-of-3, or 10-of-10. The polynomial structure guarantees that with fewer than shares you get zero information about the secret — which is why it’s used in key backup, crypto custody, and access control.

Formal notation (our implementation)

In our implementation the secret and coefficients live in . We write:

- — secret byte (the constant term of the polynomial)

- — random coefficients in , sampled from

crypto/rand - — threshold: minimum shares required to reconstruct

- — evaluation point: distinct non-zero values

GF = Galois Field — a finite algebraic structure named after French mathematician Évariste Galois (1811–1832), who laid the foundations of group theory before dying in a duel at 20.

A Galois Field has a fixed number of elements and defines addition and multiplication such that every result stays within the field — no overflow, no fractions, no elements outside the set.

GF(2⁸) specifically has exactly 256 elements — one per byte value (0x00 to 0xFF). The two operations:

- Addition = bitwise XOR (e.g.,

0xCA ⊕ 0x55 = 0x9F— always one byte) - Multiplication = log/exp table lookup using the irreducible polynomial

This is the same field AES uses internally. The tables are well-studied, constant-time, and need no big-integer arithmetic — just 256-entry byte arrays.

Arithmetic runs in — a finite field of 256 elements, where each element is exactly one byte. Addition is bitwise XOR. Multiplication uses precomputed log/exp tables derived from the irreducible polynomial .

Processing is byte-by-byte: for a -byte payload, run the split independently for each byte using a freshly sampled polynomial. The result is shares each of length . Reconstruction applies Lagrange interpolation byte-by-byte.

Visualizing the polynomial

The interactive chart below shows the polynomial defined by your chosen threshold and secret. Shares are the colored dots at . The green diamond at is the secret.

Click “Show: t−1 shares → infinite secrets” to see the information-theoretic guarantee in action: with one share removed, multiple polynomials are consistent with the remaining data — and the value at is completely undetermined.

Why information-theoretic security is different

Most cryptographic systems provide computational security: breaking them is infeasible with current hardware, but not impossible in principle. Shamir SSS provides something categorically stronger.

This property holds regardless of adversary compute. Even an attacker with infinite CPU, t−1 shares are consistent with every possible secret value — all with equal probability. There is literally nothing to compute.

Try it: interactive threshold demo

Before diving into the implementation, play with the visualizer. Enter a secret, set and , click shares to toggle them in and out of a recovery attempt:

Notice what happens when you select fewer than shares: you recover garbage, not a partial secret. That’s the core guarantee — information-theoretic security means a computationally unbounded adversary with shares learns nothing. This is categorically stronger than computational security (RSA, AES) where an adversary could break it given enough time.

Implementation

Step 1: arithmetic

// Addition and subtraction in GF(2^8) are identical: bitwise XOR.

func gf256Add(a, b byte) byte {

return a ^ b

}

// Multiplication via precomputed log/exp tables.

// Tables derived from the irreducible polynomial x^8+x^4+x^3+x+1 (0x11B) —

// the same used by AES, which means these tables are well-studied.

func gf256Mul(a, b byte) byte {

if a == 0 || b == 0 {

return 0

}

logSum := int(logTable[a]) + int(logTable[b])

if logSum >= 255 {

logSum -= 255

}

return expTable[logSum]

}

func gf256Div(a, b byte) byte {

if a == 0 {

return 0

}

if b == 0 {

panic("division by zero in GF(2^8)")

}

logDiff := int(logTable[a]) - int(logTable[b]) + 255

if logDiff >= 255 {

logDiff -= 255

}

return expTable[logDiff]

}The 256-entry exp/log tables are a one-time startup cost. Every field operation becomes O(1) byte lookups — no big integers, no arbitrary-precision arithmetic, no math/big.

GF(2⁸) arithmetic — try it live

Move the sliders to see how every operation always stays within a single byte, and why regular integer addition breaks this property:

Step 2: Split

type Share struct {

X byte // x-coordinate (1..n, never 0 — f(0) is the secret)

Data []byte // one byte per secret byte

}

func Split(secret []byte, threshold, totalShares int) ([]Share, error) {

if threshold < 2 || threshold > totalShares {

return nil, fmt.Errorf("invalid threshold: t=%d n=%d", threshold, totalShares)

}

shares := make([]Share, totalShares)

for i := range shares {

shares[i] = Share{X: byte(i + 1), Data: make([]byte, len(secret))}

}

for byteIdx, secretByte := range secret {

poly, err := newRandomPolynomial(secretByte, threshold)

if err != nil {

return nil, err

}

for shareIdx := range shares {

shares[shareIdx].Data[byteIdx] = poly.evaluate(shares[shareIdx].X)

}

}

return shares, nil

}The leading coefficient must not be zero. If it is, the effective polynomial degree drops from to something lower, and the security threshold silently collapses — shares may become sufficient to reconstruct the secret. Always re-sample:

func newRandomPolynomial(secret byte, threshold int) (*polynomial, error) {

p := &polynomial{coefficients: make([]byte, threshold)}

p.coefficients[0] = secret

randomBytes := make([]byte, threshold-1)

if _, err := rand.Read(randomBytes); err != nil {

return nil, err

}

// Re-sample until leading coefficient is non-zero.

// Expected attempts: 256/255 ≈ 1.004 — virtually always one pass.

for randomBytes[len(randomBytes)-1] == 0 {

if _, err := rand.Read(randomBytes[len(randomBytes)-1:]); err != nil {

return nil, err

}

}

copy(p.coefficients[1:], randomBytes)

return p, nil

}Use crypto/rand. Not math/rand. The entire security model depends on the coefficients being cryptographically unpredictable.

Step 3: Combine (Lagrange interpolation at )

- — the share value (polynomial evaluation at point )

- — x-coordinates of the selected shares

- All arithmetic in : subtraction XOR addition

func Combine(shares []Share) ([]byte, error) {

if len(shares) < 2 {

return nil, fmt.Errorf("need at least 2 shares")

}

// Duplicate x-coordinates cause division by zero in Lagrange interpolation.

seen := make(map[byte]bool)

for _, s := range shares {

if seen[s.X] {

return nil, fmt.Errorf("duplicate share x-coordinate: %d", s.X)

}

seen[s.X] = true

}

result := make([]byte, len(shares[0].Data))

for b := range result {

result[b] = interpolateAtZero(shares, b)

}

return result, nil

}

func interpolateAtZero(shares []Share, byteIdx int) byte {

result := byte(0)

for i, si := range shares {

basis := byte(1)

for j, sj := range shares {

if i == j {

continue

}

// L_i(0) = ∏(j≠i) (0 - x_j) / (x_i - x_j)

// In GF(2^8): subtraction == XOR

num := sj.X

den := gf256Add(si.X, sj.X)

basis = gf256Mul(basis, gf256Div(num, den))

}

result = gf256Add(result, gf256Mul(si.Data[byteIdx], basis))

}

return result

}Step 4: Settlement integration — 2-of-2 bilateral authorization

This is where the cryptographic primitive meets the product requirement. The full settlement flow:

func (s *SettlementService) CreateSettlement(

ctx context.Context,

params CreateSettlementParams,

) (*Settlement, error) {

payload := generateSigningPayload(params)

shares, err := shamir.Split(payload, 2, 2)

if err != nil {

return nil, fmt.Errorf("shamir split: %w", err)

}

// Each share encrypted with its recipient's dedicated AES-256-GCM key.

// Defense-in-depth: even if a share leaks from the database,

// it's ciphertext without the corresponding key.

adminKey, _ := keystore.GenerateKey()

merchantKey, _ := keystore.GenerateKey()

adminShareEnc, _ := keystore.EncryptWithKey(shares[0].Data, adminKey)

merchantShareEnc, _ := keystore.EncryptWithKey(shares[1].Data, merchantKey)

s.balances.DebitBalance(ctx, params.MerchantOrgID, params.Amount)

return s.repo.CreateSettlement(ctx, &Settlement{

AdminShareEncrypted: adminShareEnc,

MerchantShareEncrypted: merchantShareEnc,

AdminShareKey: adminKey,

MerchantShareKey: merchantKey,

AdminShareX: shares[0].X,

MerchantShareX: shares[1].X,

SigningPayload: payload,

Status: StatusPendingConfirmation,

})

}When both parties confirm, the system reconstructs and verifies before broadcasting:

func (s *SettlementService) executeSettlement(ctx context.Context, stl *Settlement) {

adminShare, err := keystore.DecryptWithKey(stl.AdminShareEncrypted, stl.AdminShareKey)

if err != nil {

s.failSettlement(ctx, stl, "admin share decryption failed")

return

}

merchantShare, err := keystore.DecryptWithKey(stl.MerchantShareEncrypted, stl.MerchantShareKey)

if err != nil {

s.failSettlement(ctx, stl, "merchant share decryption failed")

return

}

reconstructed, err := shamir.Combine([]shamir.Share{

{X: stl.AdminShareX, Data: adminShare},

{X: stl.MerchantShareX, Data: merchantShare},

})

if err != nil {

s.failSettlement(ctx, stl, "shamir combine failed")

return

}

if !constantTimeEqual(reconstructed, stl.SigningPayload) {

s.failSettlement(ctx, stl, "payload integrity check failed")

return

}

s.broadcast(ctx, stl, reconstructed)

}An early-exit byte comparison leaks timing information about where the reconstructed payload first differs from the original. Over a sufficient number of measurements, this leaks partial information about the secret. Use subtle.ConstantTimeCompare from the Go standard library, or implement the XOR-accumulator pattern:

func constantTimeEqual(a, b []byte) bool {

if len(a) != len(b) {

return false

}

result := byte(0)

for i := range a {

result |= a[i] ^ b[i]

}

return result == 0

}The Go compiler will not optimise away this loop. bytes.Equal makes no such guarantee.

Shamir’s information-theoretic guarantee holds in isolation. In production, shares sit in a PostgreSQL row alongside metadata that may hint at their context — settlement amounts, merchant IDs, timestamps. AES-256-GCM encryption per-party adds two things: (a) confidentiality if the database is exfiltrated, and (b) authentication — you know each share hasn’t been tampered with before combining. The overhead is negligible. The risk reduction is not.

Production results from AxisCorePay

What we measured in production

- Split + Combine latency: < 1 ms for payloads up to 10 KB — negligible compared to human confirmation round-trips

- SSS core: ~200 lines of Go, test coverage 100% — round-trip, threshold edge cases, duplicate x-coordinates, empty input

- Settlement service integration: ~400 additional lines including key management and audit logging

- Risk profiles: 2-of-2 for standard withdrawals; 2-of-3 (platform + merchant + compliance) for large settlements above a configurable threshold

- Zero incidents: No settlement has been executed without both authorized signatures in production

Security posture change:

| Metric | Before (Vault-stored key) | After (Shamir 2-of-2) |

|---|---|---|

| Compromises to steal | 1 — Vault access | 2+ independent parties |

| Info from one share | Full decryption key | 0 bits (proven) |

| Insider threat | Requires admin only | Requires collusion |

| Audit trail | Server logs | Logs + cryptographic commitment |

Key takeaways

Five things we’d tell ourselves before starting

- GF(2⁸) is the right field. One element per byte, addition is XOR, multiplication is lookup tables. No big integers, no encoding overhead. The same field AES uses — the exp/log tables are battle-tested.

- Check the leading coefficient. A silently-zeroed leading coefficient drops polynomial degree and makes the security threshold a lie. Always re-sample. The expected cost is 256/255 ≈ 1 iteration.

- Constant-time comparison is not a nice-to-have. Financial systems are timing-attack targets. Use

subtle.ConstantTimeCompare. - Encrypt shares at rest, unconditionally. AES-256-GCM per-party adds authentication and defense-in-depth at near-zero cost.

- Shamir is for data, MPC-TSS is for keys. SSS assembles the secret in memory — fine for transaction payload verification with immediate zeroing. For private keys that must never assemble, use MPC-TSS. Use both in the same system where appropriate.

FAQ

What is Shamir’s Secret Sharing and how does it differ from encryption?

Encryption protects a secret using computational hardness — breaking it is infeasible but theoretically possible given enough computation. Shamir SSS provides information-theoretic security: shares reveal literally zero bits of information, regardless of computational power. There is nothing to “break.”

When should I use Shamir SSS vs MPC-TSS for key management in Go?

Use Shamir when the secret can briefly assemble in memory for verification — e.g., a settlement payload that is immediately zeroed after use. Use MPC-TSS when the key must never assemble anywhere, such as for long-lived private keys controlling blockchain wallets.

Are the table-lookup operations constant-time?

Array lookups with computed indices are constant-time in Go (no branch prediction, no cache side-channels for small 256-element tables on modern CPUs). The explicit constant-time requirement applies to the final equality check, not the field arithmetic.

What threshold should I choose for a two-party payment authorization?

2-of-2 enforces bilateral confirmation with no unilateral override — use it when both parties must always be present. 2-of-3 (add a compliance or recovery key as the third share holder) provides fault tolerance if one party is temporarily unavailable, while still requiring two confirmations. Avoid 2-of-2 without a key recovery ceremony if permanent loss of one party would lock funds.

Is there a production-ready Go library for Shamir SSS?

HashiCorp Vault uses Shamir internally for Vault unseal key splitting, and the package is importable. The implementation above is written for transparency — for production use, prefer hashicorp/vault/shamir or a well-audited alternative rather than rolling your own field arithmetic.

We built AxisCorePay — a production multi-chain crypto payment platform using exactly these patterns. See the full case study →

Related pages on this site

- AxisCorePay Case Study — full architecture, DTES modes, and production metrics

- FinTech Engineering topic hub — cryptography, payments, and security architecture

- Applied AI services — how we build production ML systems